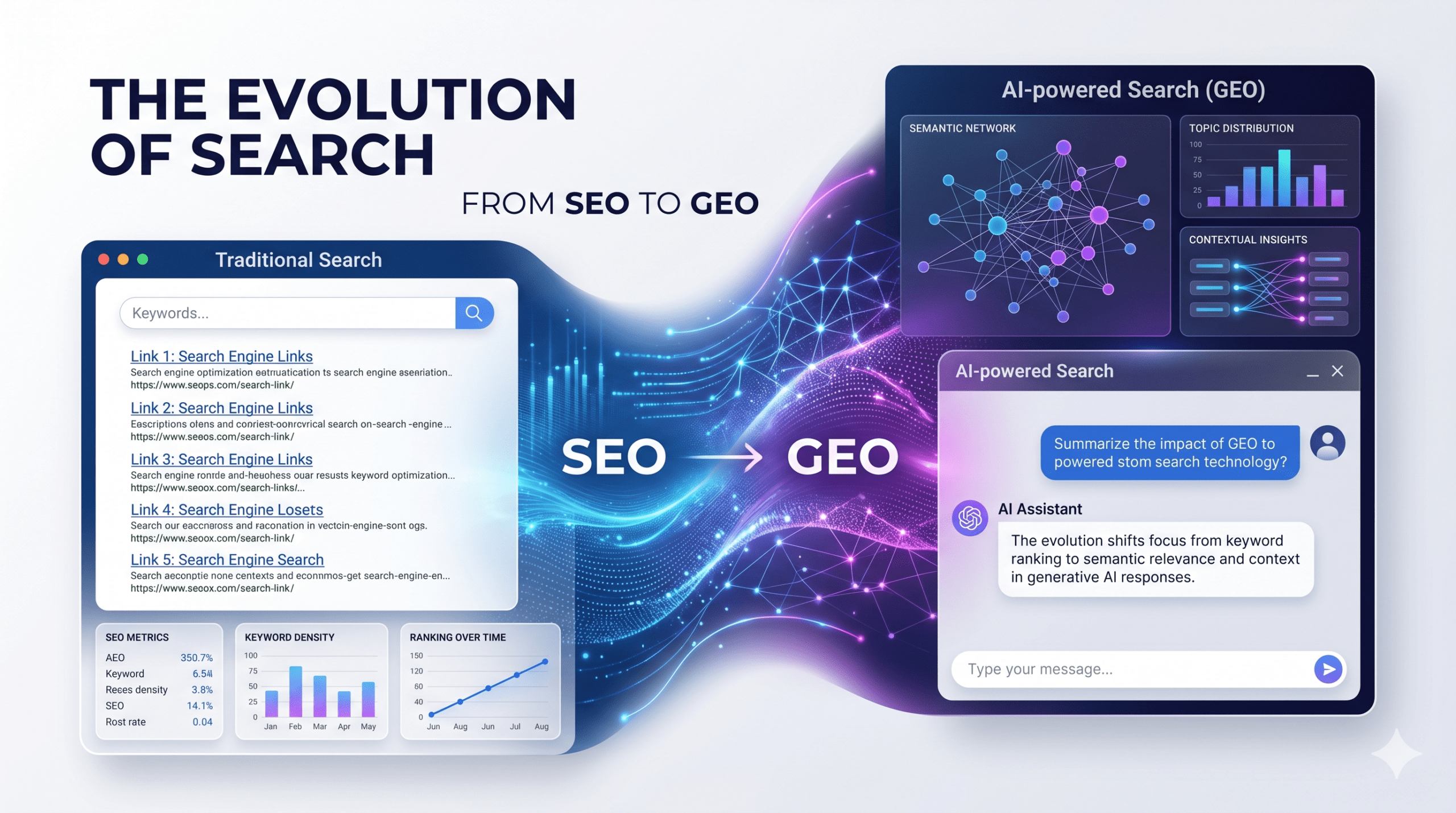

Generative search did not just alter search algorithms; it changed the fundamental relationship between users and information. Search engines no longer act as librarians handing out a list of sources. They act as synthesis engines delivering definitive answers. If your brand is left out of that synthesis, you effectively cease to exist for that user.

Quantifying your AI visibility is no longer a fringe experiment. It is the baseline for modern organic strategy. Traditional SEO measured success in clicks; generative optimization measures it in citations and cognitive real estate. Here is how to measure your footprint, and the engineering required to dominate generative outputs.

Core Metrics for Generative Search

Tracking AI outputs requires entirely new KPIs. Move beyond traditional ranking positions and evaluate these five critical indicators of generative dominance.

1. Response Inclusion (The Baseline Test): This metric asks a binary question. Does the model know you exist? If AI engines consistently ignore your brand, they either lack training data about it or consider your entity irrelevant to the semantic cluster. A growing presence signals that the algorithm trusts your content; a decline suggests competitors are feeding the model better-structured data.

- Actionable Step: Stop relying on Search Console for this. Query leading models (ChatGPT, Perplexity, Google AI Overviews) directly with non-branded, high-intent questions related to your niche. Track the exact frequency of your brand’s appearance over a 30-day rolling window to establish your baseline.
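The baseline test reduces to a simple ratio: of the answers you captured in the last 30 days, how many name your brand? The sketch below assumes you already log each answer (manually or through whatever access each engine offers; collection is not shown) into a hypothetical `Observation` record. The brands and answers are made up for illustration, and the plain substring match is a deliberate simplification.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class Observation:
    """One captured AI answer (hypothetical record format)."""
    day: date
    engine: str  # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    query: str   # a non-branded, high-intent question
    answer: str  # full text of the generated response


def mentions_brand(answer: str, brand: str) -> bool:
    # Case-insensitive substring match; extend with aliases or misspellings as needed.
    return brand.lower() in answer.lower()


def inclusion_rate(observations: List[Observation], brand: str, as_of: date, window_days: int = 30) -> float:
    """Share of answers in the trailing window (inclusive of as_of) that mention the brand."""
    start = as_of - timedelta(days=window_days - 1)
    in_window = [o for o in observations if start <= o.day <= as_of]
    if not in_window:
        return 0.0
    hits = sum(mentions_brand(o.answer, brand) for o in in_window)
    return hits / len(in_window)


# Illustrative data only: "Acme CRM", "Globex" and "Initech" are made-up brands.
obs = [
    Observation(date(2024, 5, 1), "Perplexity", "best crm for startups", "Top picks: Acme CRM, Globex."),
    Observation(date(2024, 5, 2), "ChatGPT", "best crm for startups", "Consider Globex or Initech."),
    Observation(date(2024, 5, 20), "ChatGPT", "crm with email automation", "Acme CRM offers this."),
    Observation(date(2024, 3, 1), "ChatGPT", "best crm for startups", "Acme CRM."),  # outside the window
]
rate = inclusion_rate(obs, "Acme CRM", as_of=date(2024, 5, 30))
print(f"Inclusion rate: {rate:.0%}")  # 2 of the 3 in-window answers mention the brand
```

Re-running `inclusion_rate` with a later `as_of` each day gives you the rolling trend line rather than a single snapshot.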

2. Generative Share of Voice (SOV): Traditional SOV measures your share of keyword rankings. Generative SOV measures entity dominance. If an AI lists the top five solutions in your industry, are you listed first, fifth, or omitted entirely? AI systems weight consensus data heavily; if your competitors dominate industry forums and third-party reviews, the AI adopts that consensus as fact.

- Actionable Step: Map your competitor citations across AI outputs. Identify the exact third-party sources the AI cites when generating answers about your competitors, and launch targeted digital PR campaigns to secure placements on those specific domains.
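One way to put numbers on generative SOV and the competitor citation map is sketched below. It assumes you have the answer texts and, separately, the source URLs each engine cited for a competitor; the brand names, URLs, and the "share of all brand mentions" and "average position" definitions are illustrative choices, not a standard formula.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional
from urllib.parse import urlparse


def brand_order(answer: str, brands: List[str]) -> List[str]:
    """Brands named in the answer, ordered by first appearance (naive substring match)."""
    text = answer.lower()
    found = [(text.find(b.lower()), b) for b in brands if b.lower() in text]
    return [b for _, b in sorted(found)]


def generative_sov(answers: List[str], brands: List[str]) -> Dict[str, Dict[str, Optional[float]]]:
    """Share of all brand mentions, plus average position (1 = named first)."""
    mentions: Counter = Counter()
    position_sum: Dict[str, int] = defaultdict(int)
    for answer in answers:
        for position, brand in enumerate(brand_order(answer, brands), start=1):
            mentions[brand] += 1
            position_sum[brand] += position
    total = sum(mentions.values())
    return {
        b: {
            "share": mentions[b] / total if total else 0.0,
            "avg_position": position_sum[b] / mentions[b] if mentions[b] else None,
        }
        for b in brands
    }


def cited_domains(citations: Dict[str, List[str]]) -> Dict[str, Counter]:
    """Count the domains an engine cited when answering about each competitor."""
    return {
        competitor: Counter(urlparse(u).netloc.removeprefix("www.") for u in urls)
        for competitor, urls in citations.items()
    }


# Illustrative inputs only.
answers = [
    "1. Globex 2. Acme CRM 3. Initech",
    "Acme CRM and Globex are popular.",
    "Globex leads the market.",
]
sov = generative_sov(answers, ["Acme CRM", "Globex", "Initech"])
domains = cited_domains({
    "Globex": [
        "https://www.g2.com/products/globex",
        "https://reddit.com/r/crm/abc",
        "https://www.g2.com/compare/x",
    ],
})
print(sov["Globex"], domains["Globex"].most_common(1))
```

The domains that recur most often for a competitor are the natural shortlist for the digital PR push described above.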

3. Contextual Relevance (The Weight of the Mention): Being named is not enough if you are relegated to a footnote. Contextual relevance assesses where you sit in the answer hierarchy. Does the AI position you as the definitive primary solution or as a secondary alternative?

- Actionable Step: Audit the surrounding adjectives AI assigns to your brand. Restructure your landing pages to explicitly pair your brand name with the exact use-case keywords and primary solutions you want the AI to learn and repeat.
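A rough audit of placement and wording can be automated. This sketch assumes answers arrive as plain text where ranked options appear as numbered or bulleted lines. It reports which list item first names the brand and collects the words that sit next to it on the same line, a crude stand-in for "the adjectives the AI assigns". The sample answer and brands are invented.

```python
import re
from typing import List, Optional

LIST_ITEM = re.compile(r"^[ \t]*(?:\d+[.)]|[-*•])[ \t]+(.+)$", re.MULTILINE)
WORD = re.compile(r"[A-Za-z][A-Za-z'-]*")


def list_rank(answer: str, brand: str) -> Optional[int]:
    """1-based position of the first list item that names the brand, or None."""
    for rank, item in enumerate(LIST_ITEM.findall(answer), start=1):
        if brand.lower() in item.lower():
            return rank
    return None


def context_words(answer: str, brand: str, window: int = 5) -> List[str]:
    """Lowercased words within `window` tokens of each brand mention, on the same line."""
    target = [t.lower() for t in WORD.findall(brand)]
    if not target:
        return []
    n = len(target)
    nearby: List[str] = []
    for line in answer.splitlines():
        tokens = [t.lower() for t in WORD.findall(line)]
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] == target:
                nearby += tokens[max(0, i - window):i] + tokens[i + n:i + n + window]
    return nearby


# Illustrative answer text only.
answer = (
    "Here are the leading options:\n"
    "1. Globex - the most popular all-in-one platform\n"
    "2. Acme CRM - a budget alternative for small teams\n"
    "3. Initech - enterprise focused"
)
print(list_rank(answer, "Acme CRM"), context_words(answer, "Acme CRM"))
```

If the words clustered around your brand ("budget", "alternative") differ from the use-case terms you want to own, that gap is what the landing-page restructuring should target.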

4. Sentiment and Entity Positioning: Large Language Models (LLMs) synthesize sentiment from sources such as Reddit, Quora, and review sites. A high frequency of mentions paired with hesitant or negative sentiment destroys trust instantly, because users read AI outputs as objective truth.

- Actionable Step: Aggressively manage off-page reputation on user-generated content (UGC) platforms. When you launch a new feature, flood community forums with high-quality, solved use cases. AI crawlers scrape these boards aggressively, and models draw on them to determine real-world brand perception.
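To track sentiment over time you need a score per brand, not just a mention count. The sketch below swaps in the simplest possible technique, lexicon counting over sentences that name the brand. The `POSITIVE` and `NEGATIVE` word sets are hypothetical toys. A production audit would more plausibly use a trained sentiment model, but the structure (isolate brand sentences, then score them) stays the same.

```python
import re
from typing import List

# Hypothetical toy lexicons for illustration only.
POSITIVE = {"reliable", "fast", "recommend", "excellent", "easy", "love"}
NEGATIVE = {"buggy", "slow", "expensive", "avoid", "confusing", "outage"}


def brand_sentences(text: str, brand: str) -> List[str]:
    """Split on sentence-ending punctuation and keep sentences that name the brand."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    return [s for s in sentences if brand.lower() in s.lower()]


def sentiment_score(text: str, brand: str) -> float:
    """Net lexicon sentiment in [-1, 1] over brand sentences; 0.0 when nothing matches."""
    pos = neg = 0
    for sentence in brand_sentences(text, brand):
        words = re.findall(r"[a-z]+", sentence.lower())
        pos += sum(w in POSITIVE for w in words)
        neg += sum(w in NEGATIVE for w in words)
    total = pos + neg
    return (pos - neg) / total if total else 0.0


# Illustrative forum text only.
thread = (
    "Acme CRM is reliable and easy to set up. Globex felt slow. "
    "Support for Acme CRM was slow once, but I still recommend it."
)
print(sentiment_score(thread, "Acme CRM"))  # 0.5
```

Restricting the count to brand sentences keeps a competitor's complaints ("Globex felt slow") from dragging down your own score.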

5. Cross-Engine Consistency: High visibility on one platform does not guarantee visibility on another. Perplexity favors real-time web indexing. ChatGPT relies heavily on its static training corpus and the Bing index. Google prioritizes its Knowledge Graph.

- Actionable Step: Build a cross-platform testing matrix. Run your top 20 core queries across all major engines weekly. Discrepancies will reveal exactly which data feeds (real-time news, static knowledge bases, or structured schema) your brand currently lacks.
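The testing matrix is a query-by-engine grid of yes/no cells. In this sketch, `ask(engine, query)` is a hypothetical callable standing in for however you retrieve each engine's answer. Here it is faked with canned text so the example runs offline. The output flags queries where engines disagree and computes per-engine coverage.

```python
from typing import Callable, Dict, List

Matrix = Dict[str, Dict[str, bool]]


def build_matrix(queries: List[str], engines: List[str], ask: Callable[[str, str], str], brand: str) -> Matrix:
    """Rows are queries, columns are engines, cells record whether the answer names the brand."""
    return {q: {e: brand.lower() in ask(e, q).lower() for e in engines} for q in queries}


def discrepancies(matrix: Matrix) -> Dict[str, List[str]]:
    """Queries where the brand appears on some engines but not others, with the engines that miss it."""
    return {
        q: sorted(e for e, hit in row.items() if not hit)
        for q, row in matrix.items()
        if any(row.values()) and not all(row.values())
    }


def engine_coverage(matrix: Matrix) -> Dict[str, float]:
    """Fraction of tracked queries on which each engine names the brand."""
    engines = next(iter(matrix.values())).keys() if matrix else []
    return {e: sum(row[e] for row in matrix.values()) / len(matrix) for e in engines}


# Hypothetical canned answers standing in for live engine responses.
CANNED = {
    ("Perplexity", "best crm for startups"): "Acme CRM and Globex.",
    ("ChatGPT", "best crm for startups"): "Globex is a common choice.",
    ("Google AI Overviews", "best crm for startups"): "Options include Acme CRM.",
    ("Perplexity", "crm with email automation"): "Acme CRM supports this.",
    ("ChatGPT", "crm with email automation"): "Acme CRM supports this.",
    ("Google AI Overviews", "crm with email automation"): "Acme CRM supports this.",
}


def fake_ask(engine: str, query: str) -> str:
    return CANNED[(engine, query)]


queries = ["best crm for startups", "crm with email automation"]
engines = ["ChatGPT", "Perplexity", "Google AI Overviews"]
matrix = build_matrix(queries, engines, fake_ask, "Acme CRM")
print(discrepancies(matrix), engine_coverage(matrix))
```

A query that one engine consistently misses is a hint about which data feed that engine leans on and your brand lacks.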

Engineering Your Generative Footprint

Stop writing for traditional crawlers. Start structuring data for context processors. Improving your AI visibility requires a shift from keyword density to information architecture.

- Target Seed Node Publications: AI models heavily weight information from high-authority domains (Wikipedia, major news outlets, top-tier industry journals) during training. Prioritize getting cited on these “seed nodes” rather than chasing low-tier guest posts.

- Inject BLUF Formatting: Put the Bottom Line Up Front (BLUF). LLMs extract direct answers most reliably when the answer is stated plainly and early. Place the definitive, concise answer to a query in the first 50 words of a page, then use subsequent paragraphs to expand on the nuance.

- Deploy Strict Information Architecture: Use exhaustive JSON-LD schema markup. Clear formatting and logical flow are not just UX best practices; they are the literal map AI models use to connect entities to concepts confidently.

- Align Knowledge Graphs: Ensure your messaging is identical across your website, Wikidata, Google Knowledge Panel, and major business directories. Inconsistencies fracture AI confidence, dropping you out of the generated response.
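The schema and Knowledge Graph bullets above work best from a single source of truth. This sketch assumes a hypothetical `CANONICAL` record of brand facts. It emits schema.org `Organization` JSON-LD whose `sameAs` links point at your external profiles (the Wikidata and LinkedIn URLs are placeholders). It then flags external profiles whose name or description drifts from the canonical wording. How you collect the external profile values is out of scope here.

```python
import json
from typing import Dict, List

# Hypothetical canonical record: the single source of truth for brand facts.
CANONICAL = {
    "name": "Acme CRM",
    "url": "https://www.example.com",
    "description": "CRM software for early-stage startups.",
}
# Placeholder profile URLs; substitute your real Wikidata item and profiles.
SAME_AS = [
    "https://www.wikidata.org/wiki/Q000000",
    "https://www.linkedin.com/company/example",
]


def organization_jsonld(record: Dict[str, str], same_as: List[str]) -> str:
    """Wrap schema.org Organization markup in a JSON-LD script tag."""
    data = {"@context": "https://schema.org", "@type": "Organization", **record, "sameAs": same_as}
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


def mismatched_fields(canonical: Dict[str, str], profiles: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """For each external profile, list canonical fields whose values differ (ignoring case and spacing)."""
    def norm(value: str) -> str:
        return " ".join(value.split()).lower()

    report: Dict[str, List[str]] = {}
    for source, fields in profiles.items():
        diffs = [k for k, v in canonical.items() if k in fields and norm(fields[k]) != norm(v)]
        if diffs:
            report[source] = diffs
    return report


# Illustrative external profiles only.
profiles = {
    "Wikidata": {"name": "Acme CRM", "description": "CRM software for early-stage startups."},
    "Directory listing": {"name": "ACME CRM Inc.", "description": "Sales automation suite."},
}
print(organization_jsonld(CANONICAL, SAME_AS))
print(mismatched_fields(CANONICAL, profiles))
```

Generating the markup from the same record you audit against means the on-site schema cannot silently drift from the messaging you push to directories.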
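The 50-word BLUF guideline above is easy to check mechanically across a content library. This is a minimal sketch, assuming you already have each page's visible text and the answer phrase you expect it to lead with. Both sample pages and the price are invented. Matching is a plain case-insensitive substring test over the first `max_words` whitespace-separated words.

```python
def answer_in_opening(page_text: str, answer_phrase: str, max_words: int = 50) -> bool:
    """True if the answer phrase appears within the first `max_words` whitespace-separated words."""
    opening = " ".join(page_text.split()[:max_words]).lower()
    phrase = " ".join(answer_phrase.split()).lower()
    return phrase in opening


# Illustrative pages only.
bluf_page = "Acme CRM costs $29 per user per month. " + "Supporting detail. " * 40
buried_page = "Background context. " * 30 + "Acme CRM costs $29 per user per month."
print(answer_in_opening(bluf_page, "$29 per user per month"))
print(answer_in_opening(buried_page, "$29 per user per month"))
```

Pages that fail the check are candidates for moving the definitive answer into the opening paragraph.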

Generative search rewards clarity, consensus, and authoritative structuring. Mastering this shift requires deploying targeted tracking tools to measure your generative footprint, adapting your content architecture, and securing your position as the definitive answer in your industry.