The era of ten blue links is ending. Generative engines like ChatGPT, Gemini, and Perplexity are actively intercepting users before they ever reach a traditional search engine results page (SERP).

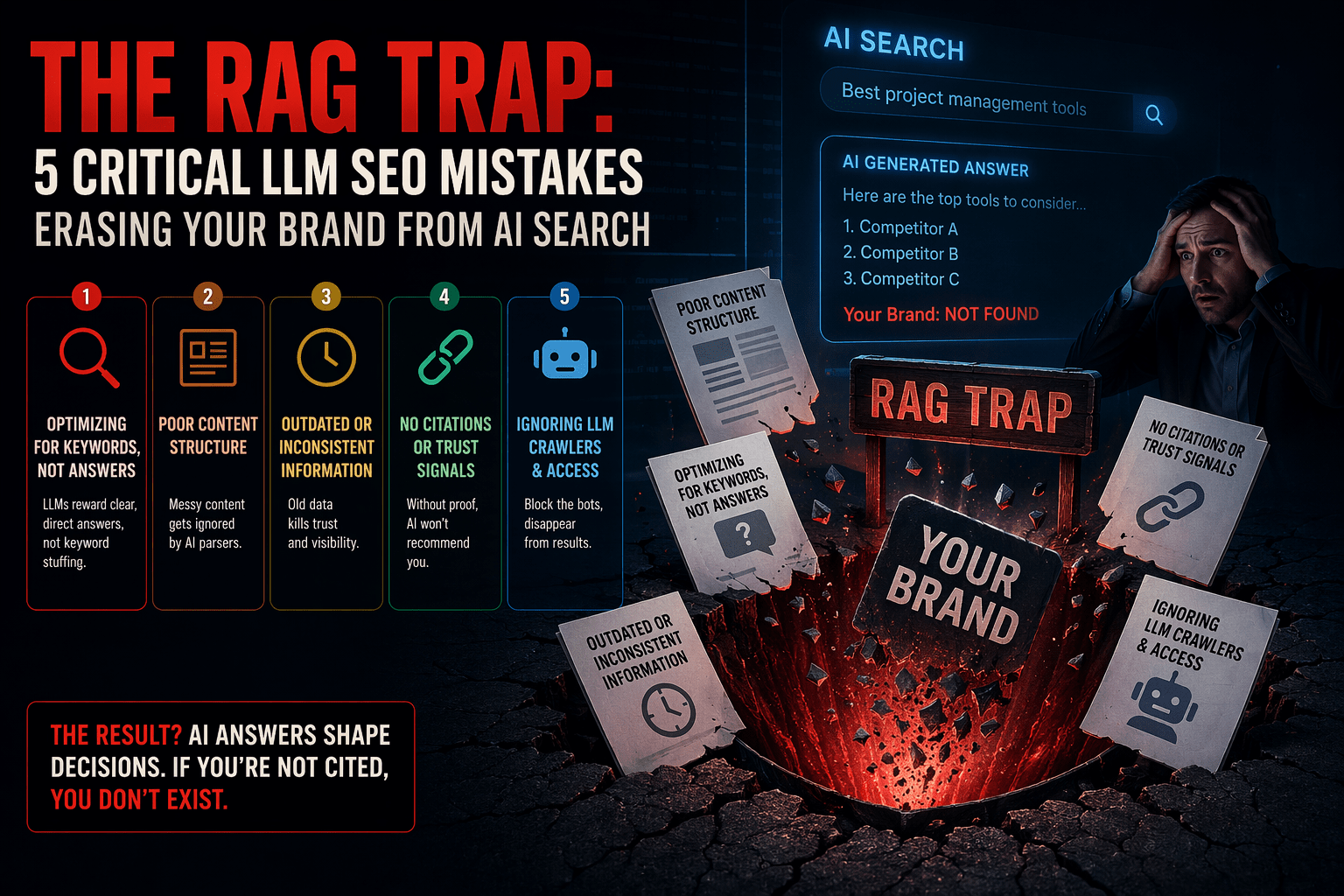

Many SEO professionals mistakenly believe that optimizing for large language models is a black-box impossibility. This is a dangerous misconception. The answer engines built on LLMs do not produce every fact from memory; many rely on Retrieval-Augmented Generation (RAG), pulling current content from indexed web pages to ground their answers. If your content is not structured for these retrieval systems, your brand is far less likely to appear in the AI-generated consensus.

Effective LLM SEO requires a fundamental pivot: you are no longer optimizing solely for algorithms that rank links; you are structuring data for machines that synthesize answers.

Here are the five critical mistakes sabotaging your visibility in AI search, and the exact steps you need to fix them.

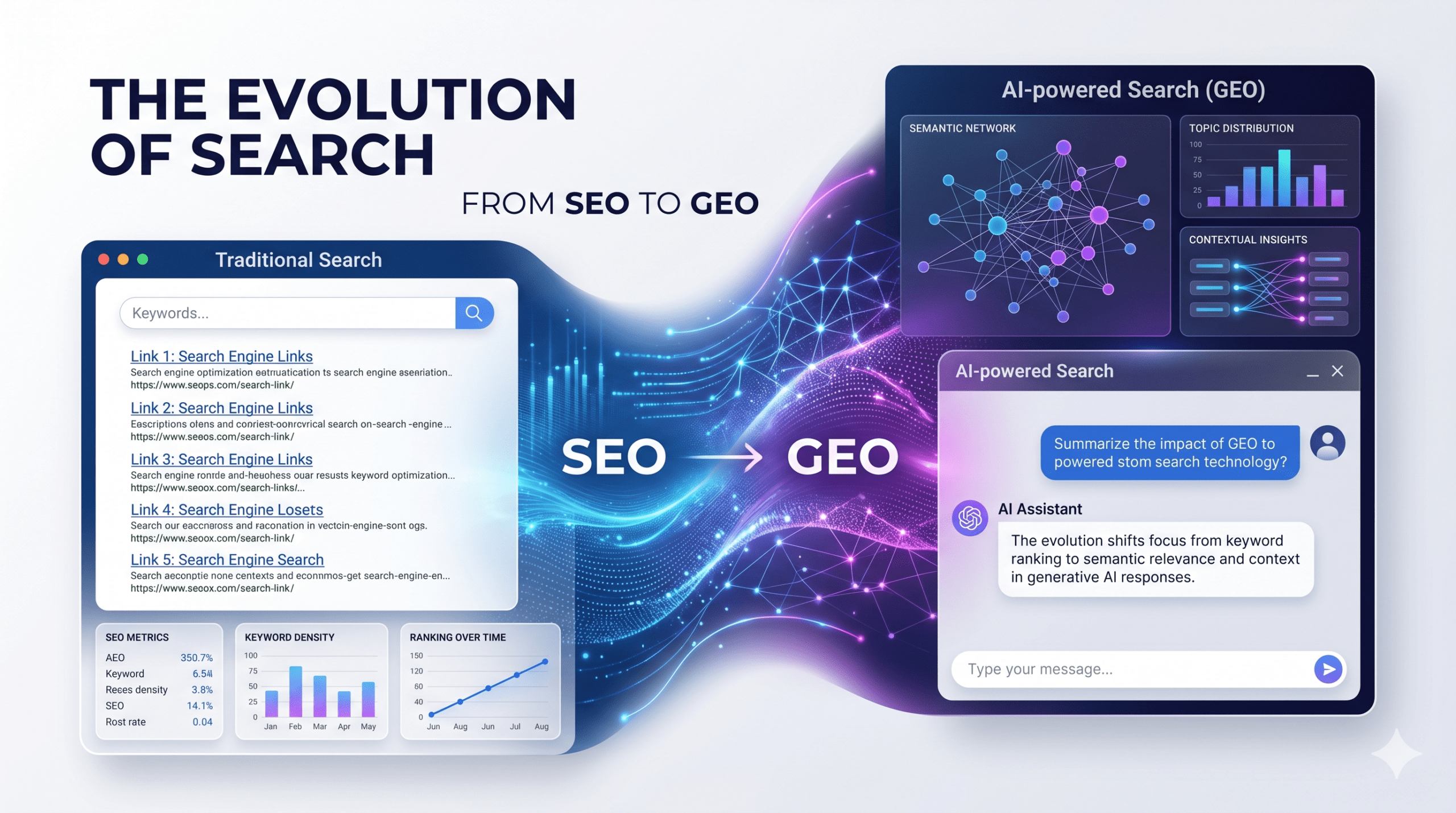

The New Rules of Engagement: What is LLM SEO?

Before dismantling the mistakes, we must redefine the baseline. LLM SEO is the practice of formatting, structuring, and connecting web content so that a machine can effortlessly parse, verify, and cite it within a generative response.

Traditional SEO acts like the Dewey Decimal System: it helps a librarian locate a book. LLM SEO acts like preparing an expert witness for trial: it feeds undeniable, clearly structured facts directly into the narrative.

5 Mistakes Sabotaging Your LLM SEO Performance

1. Treating AI Prompts Like Legacy Keywords

Searchers do not communicate with ChatGPT the way they do with Google. A traditional search query is fractured (“CRM software small business”). An AI prompt is a multi-layered, highly specific demand (“Compare the top three CRM platforms for a 50-person B2B sales team, highlighting pricing and Salesforce integration capabilities”).

Optimizing for isolated keywords ignores semantic intent. LLMs retrieve entire topical clusters to build comprehensive answers.

- The Fix: Stop tracking keywords and start mapping semantic relationships. Build out content that answers complex, multi-part questions within a single hub. Anticipate follow-up questions and structure your content to address the entire conversational thread, not just the entry point.

2. Ignoring the “Markdown Mandate” for Machine Readability

LLMs do not care about your CSS, your hero images, or your site speed. When a RAG system scrapes your page, it strips away the visual layer to read the raw text structure. If your semantic HTML is broken, or your data is buried in unformatted paragraphs, the AI will ignore it and move to a cleaner source.

- The Fix: Structure your content for direct machine ingestion:
  - Use strict logical hierarchies (H1 > H2 > H3).
  - Format comparative data exclusively in clean HTML tables.
  - Publish an llms.txt file in your root directory to point AI bots to your most critical, high-signal information. Note that llms.txt is a proposed convention, and not every AI crawler honors it yet.
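The heading-hierarchy rule above can be checked mechanically. The following is a minimal sketch using only Python's standard library; the names `HeadingCollector` and `heading_issues` are hypothetical, and the two rules it enforces (exactly one H1, no skipped levels) are one reasonable reading of "strict logical hierarchy," not a published standard.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so headings arrive as h1..h6.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return a list of human-readable problems with the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    # A heading may go deeper by one level at a time (h2 -> h3, not h2 -> h4).
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues


sample = "<h1>CRM Guide</h1><h2>Pricing</h2><h4>Enterprise tier</h4>"
print(heading_issues(sample))
```

An empty list means the outline passed both checks. Running this across your key pages is a quick way to find templates where headings were chosen for visual size rather than structure.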

3. Operating Outside the Knowledge Graph

AI answer engines are tuned to minimize hallucinations. One way they appear to do this is by favoring entities that are well established in trusted knowledge graphs (like Google’s Knowledge Graph or Wikidata). If your brand, authors, or primary claims are not recognized entities backed by verified citations, AI models are far less likely to cite you.

- The Fix: Treat digital PR and technical markup as your primary trust signals:
  - Deploy comprehensive Schema.org markup (specifically Organization, Person, and FAQPage).
  - Cite original research and link directly to primary data sources. AI models trace claims back to their origin; ensure you are the origin, or at least intimately connected to it.
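As an illustration of the Schema.org markup mentioned above, here is a minimal Python sketch that builds an Organization object as JSON-LD and wraps it in the script tag that goes in the page. The helper names `organization_jsonld` and `as_script_tag` are hypothetical, and the company name and URLs are placeholders; a real deployment would add more properties and should be validated with a structured-data testing tool.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a Schema.org Organization object as a JSON-LD dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs links the brand to its profiles on other sites.
        "sameAs": list(same_as),
    }


def as_script_tag(data):
    """Wrap a JSON-LD dict in the script tag used to embed it in HTML."""
    # Escape "</" so a value can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
org = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example-co"],
)
print(as_script_tag(org))
```

The same pattern extends to Person and FAQPage: change `@type` and supply the properties that type defines.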

4. Neglecting the Long-Tail Conversational Context

Brands aggressively fight for short-head, high-volume terms while completely abandoning the long-tail conversational queries that dominate AI platforms. Because LLMs synthesize information, they excel at answering hyper-specific use cases. If your content lacks situational context, you lose visibility.

- The Fix: Audit your current content for “who, what, where, when, why, and how.” Inject contextually rich scenarios into your core pages. Do not just state what your product does; explain precisely who it is for, what specific problem it solves, and how it compares to alternatives under different conditions.

5. Assuming Static Authority in a Volatile Ecosystem

Traditional SEO moves in slow waves, marked by major algorithm updates. AI search models update continuously as their training data and retrieval mechanisms evolve. An answer that prominently features your brand on Perplexity on Tuesday might cite a competitor instead by Friday because of a slight shift in how the model weights recency or entity trust.

- The Fix: Establish a continuous monitoring loop. Track brand mentions and answer configurations across major LLMs using specialized AI tracking tools. When your citations drop, audit the new sources the AI is citing to reverse-engineer what specific data points or structures the model suddenly prefers.
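One piece of this monitoring loop, comparing the sources an answer cites at two points in time, can be sketched in a few lines of Python. This assumes you have already collected the cited URLs for the same prompt on two dates, whether by hand or from a tracking tool; the function names and the example URLs are hypothetical.

```python
from urllib.parse import urlparse


def cited_domains(urls):
    """Reduce a list of cited URLs to a set of hostnames without 'www.'."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname  # lowercased by urlparse; None if absent
        if host:
            domains.add(host[4:] if host.startswith("www.") else host)
    return domains


def citation_shift(before, after):
    """Compare two citation snapshots for the same prompt."""
    old, new = cited_domains(before), cited_domains(after)
    return {
        "dropped": sorted(old - new),  # cited before, missing now
        "gained": sorted(new - old),   # newly cited sources to audit
        "kept": sorted(old & new),
    }


# Placeholder snapshots for one prompt, taken a few days apart.
tuesday = [
    "https://www.example.com/crm-guide",
    "https://reviews.example.org/top-crm",
]
friday = [
    "https://reviews.example.org/top-crm",
    "https://competitor.example.net/crm",
]
print(citation_shift(tuesday, friday))
```

The "gained" list is the starting point for the audit described above: those are the pages to inspect for the data points or structures the model now prefers.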

Securing Your Position in the AI Consensus

The transition to AI-driven discovery is not a future possibility; it is the current reality. Brands that cling exclusively to traditional ranking metrics will find their traffic eroding as users bypass search engines for direct answers.

Mastering these optimizations requires technical precision. Partnering with specialized agencies like AdLift can streamline the process of structuring content, aligning entities, and monitoring AI citation velocity. By treating your website as a structured database ready for machine ingestion, you build a resilient content ecosystem that secures your authority across both legacy search engines and the generative AI platforms of tomorrow.